Model Gallery

Discover and install AI models from our curated collection


qwen3.6-40b-claude-4.6-opus-deckard-heretic-uncensored-thinking-neo-code-di-imatrix-max

The Qwen 3.5 version (also 40B) got 181+ likes. This version uses the new Qwen 3.6 27B architecture (which exceeds even Qwen's own 398B model).

WARNING: This model has character and intelligence. It will take no prisoners. It will give no quarter. Uncensored, unfiltered, and boldly confident. Not even remotely "SFW" if you ask it for NSFW content. It is wickedly smart too, exceeding the base model in 6 out of 7 benchmarks.

Qwen3.6-40B-Claude-4.6-Opus-Deckard-Heretic-Uncensored-Thinking

40 billion parameters (dense, not MoE), expanded from the 27B Qwen 3.6 and then trained on the Claude 4.6 Opus High Reasoning dataset via Unsloth on local hardware... but there is much more to the story: in comes DECKARD.

96 layers, 1275 tensors (50% more than the 27B base model).

Features variable-length reasoning: shorter for less complex prompts, longer for more complex ones.

Model performance has increased dramatically. And it has character too.

A lot of character.

No censorship, no nanny. (via Heretic)

And it is very, very smart.

...

Repository: localai

License: apache-2.0

qwopus3.6-35b-a3b-v1

# Qwen3.6-35B-A3B


> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

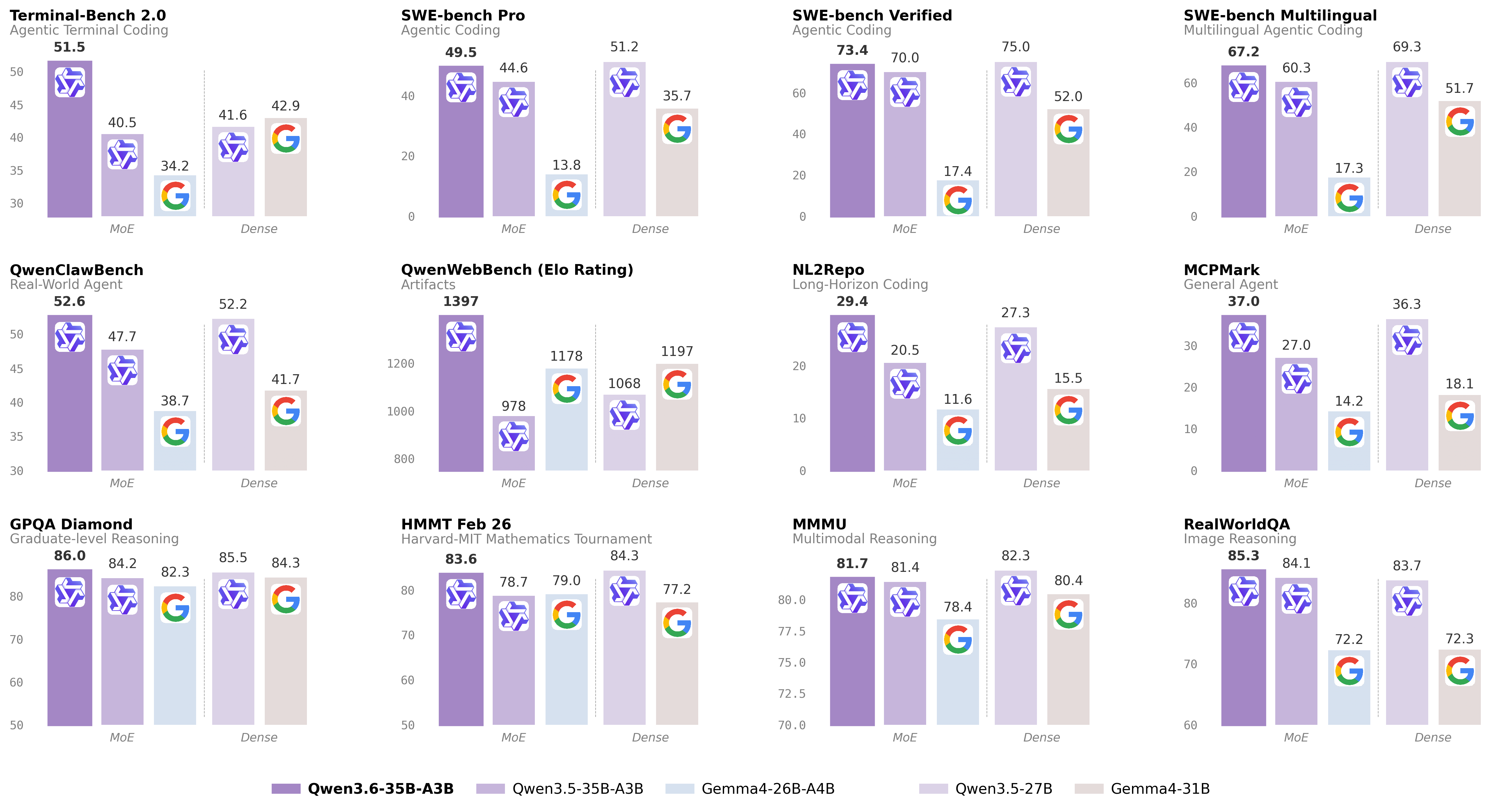

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-27b-heretic-uncensored-finetune-neo-code-di-imatrix-max

Qwen3.6-27B-Heretic2-Uncensored-Finetune-Thinking

Yes... fully uncensored AND lightly fine-tuned.

Freedom and brainpower.

Trained on a different Heretic base, with different KLD/Refusals.

The fine-tune was used to finalize and "firm up" the Heretic / uncensored changes.

The goal here was light, minor fixes rather than a full / heavy fine-tune.

That being said, the tuning still raised critical metrics.

This is Version 2, using "trohrbaugh" Heretic, which has a lower refusal rate, and tuning bumped up the metrics a bit more too.

This has also positively impacted "NEO-Coder Di-Matrix" (dual imatrix) GGUF quants as well (vs heretic/non heretic too).

https://huggingface.co/DavidAU/Qwen3.6-27B-Heretic-Uncensored-FINETUNE-NEO-CODE-Di-IMatrix-MAX-GGUF

```
IN HOUSE BENCHMARKS [by Nightmedia]:

      arc-c arc/e boolq hswag obkqa piqa wino

Qwen3.6-27B-Heretic2-Uncensored-Finetune-Thinking
mxfp8 0.673,0.846,0.905... [instruct mode]

Qwen3.6-27B-Heretic-Uncensored-Finetune-Thinking
mxfp8 0.669,0.835,0.906,... [instruct mode]

BASE UNTUNED MODEL:

Qwen3.6-27B HERETIC (by llmfan46) [instruct mode]
mxfp8 0.644,0.788,0.902,...
```

...

Repository: localai

License: apache-2.0

qwen3.5-9b-deepseek-v4-flash

# Qwen3.5-9B


> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Over recent months, we have intensified our focus on developing foundation models that deliver exceptional utility and performance. Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

## Qwen3.5 Highlights

Qwen3.5 features the following enhancements:

- **Unified Vision-Language Foundation**: Early fusion training on multimodal tokens achieves cross-generational parity with Qwen3 and outperforms Qwen3-VL models across reasoning, coding, agents, and visual understanding benchmarks.

- **Efficient Hybrid Architecture**: Gated Delta Networks combined with sparse Mixture-of-Experts deliver high-throughput inference with minimal latency and cost overhead.

...

Repository: localai

License: apache-2.0

carnice-v2-27b

# Carnice-V2-27B for Hermes Agent

Carnice-V2-27B is a full merged BF16 SFT of `Qwen/Qwen3.6-27B` for Hermes-style agent traces. This repository contains the standalone merged model weights, not only a LoRA adapter.

## BF16 Transformers Loading Fix

The BF16 safetensors were republished with corrected `Qwen3_5ForConditionalGeneration` tensor prefixes. The original merge artifact accidentally serialized an extra Unsloth wrapper prefix, which caused direct HF Transformers loads to report the real weights as unexpected keys and initialize expected layers randomly. GGUF files were not affected because the GGUF conversion path normalized those prefixes.
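The failure mode described above can be illustrated with plain dictionaries. This is a minimal sketch, not the author's actual repair script: the prefix string `wrapper.` and the key names are hypothetical, since the card does not state the real Unsloth prefix. It shows why a stray prefix makes a loader report every real weight as "unexpected" and every expected layer as "missing", and how stripping the prefix resolves both.

```python
from typing import Dict, Iterable, List, Tuple, TypeVar

T = TypeVar("T")

# Hypothetical prefix, for illustration only; the model card does not name it.
WRAPPER_PREFIX = "wrapper."


def strip_prefix(state: Dict[str, T], prefix: str = WRAPPER_PREFIX) -> Dict[str, T]:
    """Return a copy of `state` with `prefix` removed from keys that start with it."""
    cleaned: Dict[str, T] = {}
    for key, value in state.items():
        new_key = key[len(prefix):] if key.startswith(prefix) else key
        if new_key in cleaned:
            raise ValueError(f"duplicate key after stripping prefix: {new_key}")
        cleaned[new_key] = value
    return cleaned


def diff_keys(expected: Iterable[str], actual: Iterable[str]) -> Tuple[List[str], List[str]]:
    """Mimic a loader's report: (missing keys, unexpected keys)."""
    expected_set, actual_set = set(expected), set(actual)
    return sorted(expected_set - actual_set), sorted(actual_set - expected_set)


# Toy stand-ins for the model's expected parameter names and the saved tensors.
expected_keys = ["model.layers.0.weight", "model.layers.1.weight"]
saved = {
    "wrapper.model.layers.0.weight": 1.0,
    "wrapper.model.layers.1.weight": 2.0,
}

missing_before, unexpected_before = diff_keys(expected_keys, saved)
missing_after, unexpected_after = diff_keys(expected_keys, strip_prefix(saved))
```

Before stripping, both expected keys are missing (so a loader would initialize them randomly) and both saved keys are unexpected; after stripping, both lists are empty.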

## Benchmarks

The benchmark artifact bundle is included under `benchmarks/`. It contains the rendered graph, extracted `metrics.json`, benchmark scripts, and raw result files used to make the chart.

Scope note: the IFEval run is a short `limit=20` A/B smoke benchmark, not an official full leaderboard score. Held-out loss/perplexity is the exact assistant-only training-format validation metric from the SFT script. The raw BFCL two-case smoke files are included for auditability, but they are too small to use as a model-quality claim.

...

Repository: localai

License: apache-2.0

qwopus3.6-27b-v1-preview

# Qwen3.6-27B


> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-27b

# Qwen3.6-27B


> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-35b-a3b-claude-4.6-opus-reasoning-distilled

# 🔥 Qwen3.6-35B-A3B-Claude-4.6-Opus-Reasoning-Distilled

A reasoning SFT fine-tune of `Qwen/Qwen3.6-35B-A3B` on chain-of-thought (CoT) distillation mostly sourced from Claude Opus 4.6. The goal is to preserve Qwen3.6's strong agentic coding and reasoning base while nudging the model toward structured Claude Opus-style reasoning traces and more stable long-form problem solving.

The training path is text-only. The Qwen3.6 base architecture includes a vision encoder, but this fine-tuning run did not train on image or video examples.

- **Developed by:** @hesamation

- **Base model:** `Qwen/Qwen3.6-35B-A3B`

- **License:** apache-2.0

This fine-tuning run is inspired by Jackrong/Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled, including the notebook/training workflow style and Claude Opus reasoning-distillation direction.


## Benchmark Results

The MMLU-Pro pass used 70 total questions per model: `--limit 5` across 14 MMLU-Pro subjects. Treat this as a smoke/comparative check, not a release-quality full benchmark.
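To make the "smoke check" caveat concrete, the sketch below reproduces the sample-size arithmetic from the card (14 subjects times `--limit 5`) and estimates how noisy an accuracy figure on that many questions can be. The binomial standard-error formula assumes independent questions; that is a simplifying assumption made here, not something the card states.

```python
import math

SUBJECTS = 14          # MMLU-Pro subjects, as stated in the card
LIMIT_PER_SUBJECT = 5  # the `--limit 5` setting, as stated in the card


def standard_error(accuracy: float, n: int) -> float:
    """Binomial standard error of an accuracy measured on n independent questions."""
    return math.sqrt(accuracy * (1.0 - accuracy) / n)


total_questions = SUBJECTS * LIMIT_PER_SUBJECT

# accuracy = 0.5 maximizes p * (1 - p), so this is the widest (worst-case) standard error.
worst_case_se = standard_error(0.5, total_questions)

print(total_questions)          # 70
print(round(worst_case_se, 3))  # 0.06
```

Under these assumptions, one standard error is about six percentage points, so small gaps between two models on this run are within noise.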

...

Repository: localai

License: apache-2.0

qwen3.5-9b-glm5.1-distill-v1

# 🪐 Qwen3.5-9B-GLM5.1-Distill-v1

## 📌 Model Overview

**Model Name:** `Jackrong/Qwen3.5-9B-GLM5.1-Distill-v1`

**Base Model:** Qwen3.5-9B

**Training Type:** Supervised Fine-Tuning (SFT, Distillation)

**Parameter Scale:** 9B

**Training Framework:** Unsloth

This model is a distilled variant of **Qwen3.5-9B**, trained on high-quality reasoning data derived from **GLM-5.1**.

The primary goals are to:

- Improve **structured reasoning ability**

- Enhance **instruction-following consistency**

- Activate **latent knowledge via better reasoning structure**

## 📊 Training Data

### Main Dataset

- `Jackrong/GLM-5.1-Reasoning-1M-Cleaned`

- Cleaned from the original `Kassadin88/GLM-5.1-1000000x` dataset.

- Generated from a **GLM-5.1 teacher model**

- Approximately **700x** the scale of `Qwen3.5-reasoning-700x`

- Training used a **filtered subset**, not the full source dataset.

### Auxiliary Dataset

- `Jackrong/Qwen3.5-reasoning-700x`

...

Repository: localai

License: apache-2.0

qwen3.6-35b-a3b-apex

# Qwen3.6-35B-A3B


> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen3.6-35b-a3b

# Qwen3.6-35B-A3B


> [!Note]

> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.

>

> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai

License: apache-2.0

qwen_qwen3.5-2b

Qwen3.5-2B is a highly efficient, instruction-tuned multilingual language model available in various quantized GGUF formats. Optimized for llama-cpp inference, it supports chat and completion tasks with strong performance on low-RAM hardware. The model is available in multiple quantization levels ranging from Q8_0 to IQ2_M to balance quality and resource usage.
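As a rough guide to the quality-versus-resource trade-off, file size can be estimated from bits per weight. The sketch below derives the Q8_0 figure from its block layout (32 weights stored as 8-bit integers plus one 16-bit scale per block) and applies it to a nominal 2 billion parameters. The exact parameter count is an assumption, and the estimate ignores file metadata and any tensors stored at a different precision; other quantization levels would need their own bits-per-weight figure.

```python
def q8_0_bits_per_weight(block_size: int = 32, scale_bits: int = 16) -> float:
    """Bits per weight for a block of 8-bit values sharing one scale."""
    return (block_size * 8 + scale_bits) / block_size


def estimate_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in bytes, ignoring metadata."""
    return n_params * bits_per_weight / 8


NOMINAL_PARAMS = 2e9  # assumption: "2B" taken as exactly 2 billion parameters

bpw = q8_0_bits_per_weight()
size_gb = estimate_bytes(NOMINAL_PARAMS, bpw) / 1e9

print(bpw)      # 8.5
print(size_gb)  # 2.125
```

Passing a smaller bits-per-weight value to `estimate_bytes` shows how lower quantization levels shrink the file proportionally.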

Repository: localai

License: apache-2.0

qwen_qwen3.5-4b

Qwen3.5-4B is a multimodal LLM with 4 billion parameters, optimized for chat and vision tasks. This GGUF quantized version enables efficient local inference via llama-cpp backend. Supports both text and image input for enhanced conversational capabilities.
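A combined text-and-image request might look like the sketch below. It assumes the serving backend accepts the OpenAI-style chat completions schema, in which a message's `content` is a list of text and `image_url` parts; that assumption, the use of the gallery entry name as the `model` value, and the placeholder image bytes are all illustrative and are not confirmed by this listing. The code only builds the JSON body and sends nothing.

```python
import base64
import json
from typing import Any, Dict


def build_vision_request(model: str, prompt: str, image_bytes: bytes,
                         mime: str = "image/png") -> Dict[str, Any]:
    """Build an OpenAI-style chat payload with one text part and one image part."""
    # Images are embedded inline as a base64 data URL.
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
    }


# Placeholder bytes stand in for a real image file's contents.
payload = build_vision_request("qwen_qwen3.5-4b", "Describe this image.", b"placeholder")
body = json.dumps(payload)
```

The resulting `body` string is what would be sent as the request body to a chat completions endpoint, if the server follows that schema.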

Repository: localai

License: apache-2.0

qwen3.5-27b-claude-4.6-opus-reasoning-distilled-i1

Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled-i1-GGUF - A GGUF quantized model optimized for local inference. Specialized for reasoning and chain-of-thought tasks. Based on Qwen 3.5 architecture with enhanced language understanding. Available in multiple quantization levels for various hardware requirements. Distilled from Claude-style reasoning models for enhanced logical reasoning capabilities.

Repository: localai

License: apache-2.0

qwen3.5-4b-claude-4.6-opus-reasoning-distilled

Qwen3.5-4B-Claude-4.6-Opus-Reasoning-Distilled-GGUF - A GGUF quantized model optimized for local inference. Specialized for reasoning and chain-of-thought tasks. Based on Qwen 3.5 architecture with enhanced language understanding. Available in multiple quantization levels for various hardware requirements. Distilled from Claude-style reasoning models for enhanced logical reasoning capabilities.

Repository: localai

License: apache-2.0
