Model Gallery

Discover and install AI models from our curated collection

qwopus3.6-35b-a3b-v1

# Qwen3.6-35B-A3B

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

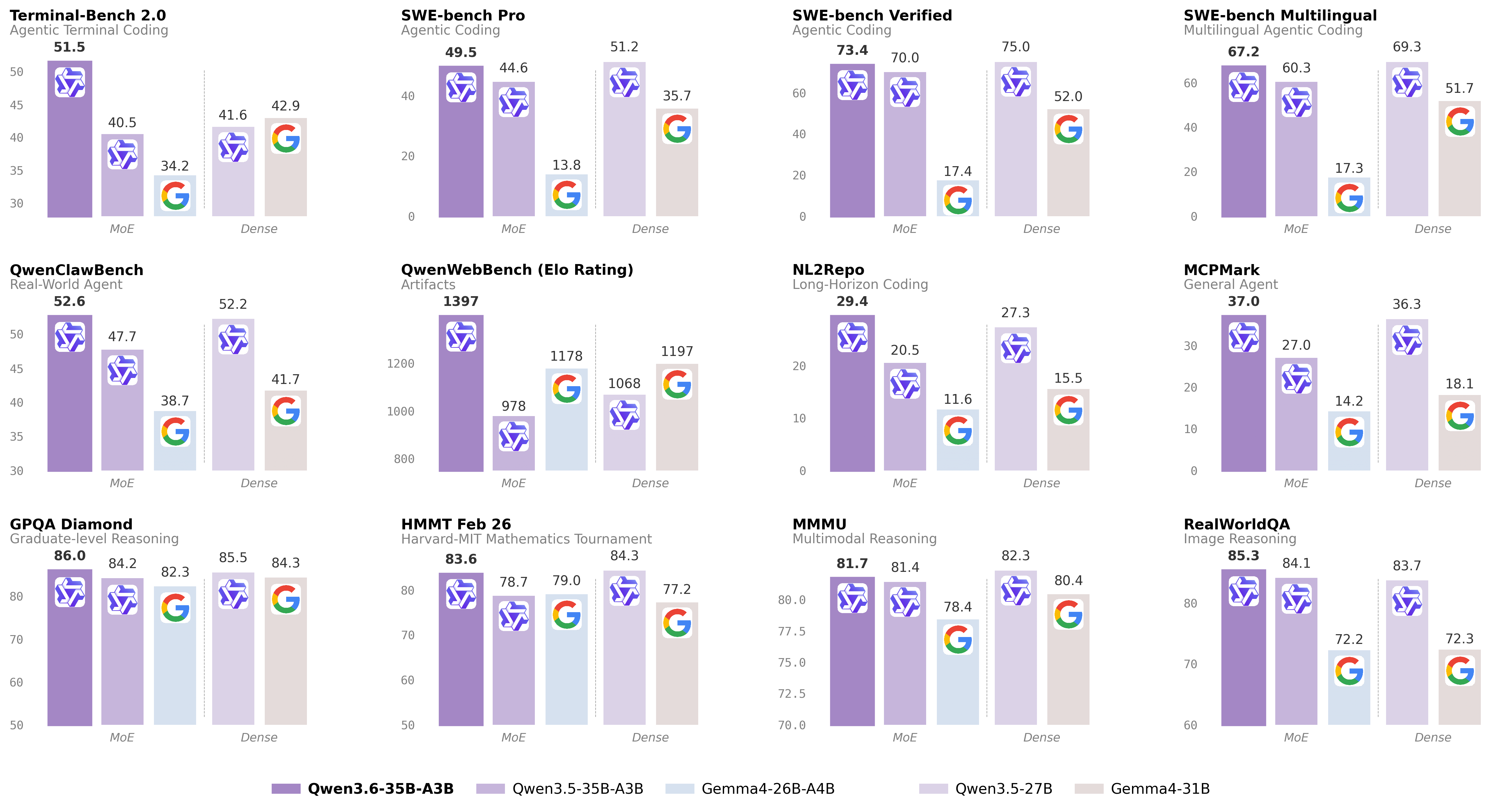

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.
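Thinking Preservation is described here only at a high level. As a rough illustration of the idea, the sketch below shows a client deciding whether earlier assistant turns keep their reasoning when the history is sent back to the model. The message layout and the `reasoning` key are assumptions made for illustration; they are not Qwen's chat template or API.

```python
from copy import deepcopy


def build_history(messages: list[dict], preserve_thinking: bool = False) -> list[dict]:
    """Prepare prior chat turns for the next request.

    Hypothetical message layout: {"role": ..., "content": ..., "reasoning": ...}.
    """
    history = deepcopy(messages)  # never mutate the caller's transcript
    if not preserve_thinking:
        for msg in history:
            if msg["role"] == "assistant":
                # Common default: drop earlier chain-of-thought to save context.
                msg.pop("reasoning", None)
    return history


if __name__ == "__main__":
    turns = [
        {"role": "user", "content": "Refactor utils.py"},
        {"role": "assistant", "content": "Done.", "reasoning": "Split helpers into modules..."},
        {"role": "user", "content": "Now add tests"},
    ]
    print(build_history(turns)[1])                          # reasoning removed
    print(build_history(turns, preserve_thinking=True)[1])  # reasoning kept
```

Keeping the reasoning costs context tokens on every turn; the trade-off is that the model can build on its earlier analysis instead of re-deriving it.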

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai · License: apache-2.0

qwen3.5-9b-deepseek-v4-flash

# Qwen3.5-9B

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Over recent months, we have intensified our focus on developing foundation models that deliver exceptional utility and performance. Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency.

## Qwen3.5 Highlights

Qwen3.5 features the following enhancements:

- **Unified Vision-Language Foundation**: Early fusion training on multimodal tokens achieves cross-generational parity with Qwen3 and outperforms Qwen3-VL models across reasoning, coding, agents, and visual understanding benchmarks.

- **Efficient Hybrid Architecture**: Gated Delta Networks combined with sparse Mixture-of-Experts deliver high-throughput inference with minimal latency and cost overhead.
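For readers unfamiliar with Gated Delta Networks, the sketch below implements the gated delta rule from the Gated DeltaNet paper (Yang et al.) in its simple recurrent form: a state matrix S is decayed by a gate α, the value currently stored under key k is partly erased (strength β), and the new value v is written. It handles one head with plain Python lists, assumes L2-normalised keys, and omits the chunked parallel kernels used in practice as well as the MoE layers; it is not Qwen3.5's implementation.

```python
def gated_delta_step(S, q, k, v, alpha, beta):
    """One recurrent step of the gated delta rule for a single head.

    S: d_v x d_k state (list of rows); k should be L2-normalised.
    Update: S <- alpha * S (I - beta k k^T) + beta v k^T ; output o = S q.
    """
    d_v, d_k = len(S), len(k)
    # What the memory currently returns for key k: S k (length d_v).
    Sk = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]
    # alpha * (S - beta (S k) k^T) decays the state and erases the old value at k,
    # then beta v k^T writes the new value.
    S = [[alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j]
          for j in range(d_k)] for i in range(d_v)]
    o = [sum(S[i][j] * q[j] for j in range(d_k)) for i in range(d_v)]
    return S, o


if __name__ == "__main__":
    S = [[0.0, 0.0], [0.0, 0.0]]
    key = [1.0, 0.0]
    S, o = gated_delta_step(S, key, key, [3.0, 4.0], alpha=1.0, beta=1.0)
    print(o)  # [3.0, 4.0]
    S, o = gated_delta_step(S, key, key, [5.0, 6.0], alpha=1.0, beta=1.0)
    print(o)  # [5.0, 6.0]: the old value was replaced, not summed
```

For comparison, plain linear attention (S + v kᵀ) would return [8.0, 10.0] at the second step; the erase term is what lets the fixed-size state overwrite stale associations, and α < 1 additionally fades old memory.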

...

Repository: localai · License: apache-2.0

kimi-k2.6

## 1. Model Introduction

Kimi K2.6 is an open-source, native multimodal agentic model that advances practical capabilities in long-horizon coding, coding-driven design, proactive autonomous execution, and swarm-based task orchestration.

### Key Features

- **Long-Horizon Coding**: K2.6 achieves significant improvements on complex, end-to-end coding tasks, generalizing robustly across programming languages (Rust, Go, Python) and domains spanning front-end, DevOps, and performance optimization.

- **Coding-Driven Design**: K2.6 is capable of transforming simple prompts and visual inputs into production-ready interfaces and lightweight full-stack workflows, generating structured layouts, interactive elements, and rich animations with deliberate aesthetic precision.

- **Elevated Agent Swarm**: Scaling horizontally to 300 sub-agents executing 4,000 coordinated steps, K2.6 can dynamically decompose tasks into parallel, domain-specialized subtasks, delivering end-to-end outputs from documents to websites to spreadsheets in a single autonomous run.

- **Proactive & Open Orchestration**: For autonomous tasks, K2.6 demonstra

...
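The agent-swarm feature above describes decomposing a task into parallel subtasks and merging the results. The sketch below shows only the generic fan-out/fan-in pattern behind that idea, with a cap on concurrent workers. The subtask list is supplied by hand and `run_subagent` is a hypothetical placeholder; in K2.6 the model itself plans the decomposition, and this is not Moonshot's orchestration code.

```python
import asyncio


async def run_subagent(subtask: str, limit: asyncio.Semaphore) -> str:
    """Hypothetical sub-agent: in a real system this would call a model and tools."""
    async with limit:            # caps how many sub-agents run concurrently
        await asyncio.sleep(0)   # placeholder for the actual model/tool work
        return f"result of {subtask}"


async def orchestrate(task: str, subtasks: list[str], max_parallel: int = 4) -> dict:
    """Fan out independent subtasks, then fan the results back in."""
    limit = asyncio.Semaphore(max_parallel)
    results = await asyncio.gather(*(run_subagent(s, limit) for s in subtasks))
    # gather() returns results in the same order as its inputs.
    return {"task": task, "parts": dict(zip(subtasks, results))}


if __name__ == "__main__":
    report = asyncio.run(orchestrate("build a landing page", ["copy", "layout", "styles"]))
    print(report["parts"])
```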

Repository: localai · License: modified-mit

qwopus3.6-27b-v1-preview

# Qwen3.6-27B

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai · License: apache-2.0

qwen3.6-27b

# Qwen3.6-27B

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-27B.

## Model Overview

...

Repository: localai · License: apache-2.0

qwen3.6-35b-a3b-apex

# Qwen3.6-35B-A3B

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai · License: apache-2.0

qwen3.6-35b-a3b

# Qwen3.6-35B-A3B

> [!Note]
> This repository contains model weights and configuration files for the post-trained model in the Hugging Face Transformers format.
>
> These artifacts are compatible with Hugging Face Transformers, vLLM, SGLang, KTransformers, etc.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

## Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

- **Agentic Coding:** the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

- **Thinking Preservation:** we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

For more details, please refer to our blog post Qwen3.6-35B-A3B.

## Model Overview

...

Repository: localai · License: apache-2.0

qwen_qwen3.5-2b

Qwen3.5-2B is a compact, instruction-tuned multilingual language model distributed here as quantized GGUF files for the llama.cpp backend. It supports chat and completion tasks and performs well on low-RAM hardware. Quantization levels range from Q8_0 down to IQ2_M, trading output quality against resource usage.
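The quantization level largely determines download size and memory use. As a back-of-the-envelope sketch, the bits per weight of the two simplest llama.cpp block formats follow directly from their block layouts (32 weights plus one fp16 scale per block), and multiplying by the parameter count gives a rough file-size estimate. Q4_0 is used only because its layout is simple and may not be among the files offered for this model; K-quants and IQ formats such as IQ2_M use more elaborate layouts that this sketch does not model, and real files differ because some tensors are kept at higher precision. The 2e9 parameter count is a nominal figure, not the model's exact size.

```python
# Two "legacy" llama.cpp block formats: each block packs 32 weights
# together with a single fp16 scale (2 bytes).
BLOCK_LAYOUTS = {
    # quant type: (weights per block, bytes per block)
    "Q8_0": (32, 2 + 32),       # fp16 scale + 32 signed 8-bit values
    "Q4_0": (32, 2 + 32 // 2),  # fp16 scale + 32 4-bit values, two per byte
}


def bits_per_weight(quant: str) -> float:
    weights, nbytes = BLOCK_LAYOUTS[quant]
    return nbytes * 8 / weights


def approx_size_gb(n_params: float, quant: str) -> float:
    """Rough estimate assuming every weight uses `quant` (ignores metadata and mixed tensors)."""
    return n_params * bits_per_weight(quant) / 8 / 1e9


if __name__ == "__main__":
    for quant in BLOCK_LAYOUTS:
        # 2e9 is a nominal parameter count, not the exact size of Qwen3.5-2B.
        print(quant, bits_per_weight(quant), "bpw,", f"~{approx_size_gb(2e9, quant):.2f} GB")
```

The same arithmetic explains why a lower quantization level roughly halves the footprint each time the bits per weight halve.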

Repository: localai · License: apache-2.0

qwen_qwen3.5-4b

Qwen3.5-4B is a multimodal LLM with 4 billion parameters, optimized for chat and vision tasks. This GGUF-quantized version enables efficient local inference through the llama.cpp backend and accepts both text and image input.
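To combine text and an image in one request, OpenAI-style chat APIs put a list of content parts in the user message, with the image passed as a base64 `data:` URL. The sketch below builds (but does not send) such a request for LocalAI's OpenAI-compatible endpoint. The URL assumes a local server on LocalAI's default port, and the model name assumes the model was installed under its gallery name; adjust both for your setup.

```python
import base64
import json
import urllib.request

# Assumption: LocalAI running locally on its default port with the
# OpenAI-compatible chat completions route.
LOCALAI_CHAT_URL = "http://localhost:8080/v1/chat/completions"


def build_vision_request(model: str, prompt: str, image: bytes,
                         mime: str = "image/png") -> urllib.request.Request:
    """Build (but do not send) a chat request carrying text plus one inline image."""
    data_url = f"data:{mime};base64,{base64.b64encode(image).decode('ascii')}"
    payload = {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                {"type": "image_url", "image_url": {"url": data_url}},
            ],
        }],
    }
    return urllib.request.Request(
        LOCALAI_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder bytes stand in for a real image file.
    req = build_vision_request("qwen_qwen3.5-4b", "Describe this image.", b"\x89PNG...")
    print(req.full_url, req.get_method())
    # To actually send it (requires a running server with the model installed):
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp)["choices"][0]["message"]["content"])
```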

Repository: localai · License: apache-2.0

nanbeige4.1-3b-q8

Nanbeige4.1-3B is built upon Nanbeige4-3B-Base and represents an enhanced iteration of our previous reasoning model, Nanbeige4-3B-Thinking-2511, achieved through further post-training optimization with supervised fine-tuning (SFT) and reinforcement learning (RL). As a highly competitive open-source model at a small parameter scale, Nanbeige4.1-3B illustrates that compact models can simultaneously achieve robust reasoning, preference alignment, and effective agentic behaviors.

Key features:

- **Strong Reasoning**: Capable of solving complex, multi-step problems through sustained and coherent reasoning within a single forward pass, reliably producing correct answers on benchmarks like LiveCodeBench-Pro, IMO-Answer-Bench, and AIME 2026 I.

- **Robust Preference Alignment**: Outperforms same-scale models (e.g., Qwen3-4B-2507, Nanbeige4-3B-2511) and larger models (e.g., Qwen3-30B-A3B, Qwen3-32B) on Arena-Hard-v2 and Multi-Challenge.

- **Agentic Capability**: First general small model to natively support deep-search tasks and sustain complex problem-solving with >500 rounds of tool invocations; excels in benchmarks like xBench-DeepSearch (75), Browse-Comp (39), and others.
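A "round of tool invocation" is one cycle in which the model requests a tool, the client runs it, and the result is appended to the context. The sketch below shows that generic loop with a round budget. The `toy_policy` and `search` tool are hypothetical stand-ins for the model and a real search backend; this is not Nanbeige's agent framework.

```python
from typing import Callable

# A policy maps the transcript so far to either ("tool", name, argument) or ("answer", text).
Policy = Callable[[list], tuple]


def run_agent(policy: Policy, tools: dict[str, Callable[[str], str]],
              question: str, max_rounds: int = 500):
    """Alternate model decisions and tool calls until an answer or the round budget runs out."""
    transcript = [("user", question)]
    for _ in range(max_rounds):
        action = policy(transcript)
        if action[0] == "answer":
            return action[1], transcript
        _, name, argument = action
        observation = tools[name](argument)          # one tool invocation = one round
        transcript.append(("tool", name, observation))
    raise RuntimeError(f"no answer after {max_rounds} tool rounds")


if __name__ == "__main__":
    def toy_policy(transcript):
        # Hypothetical stand-in for the model: search once, then answer.
        if len(transcript) == 1:
            return ("tool", "search", transcript[0][1])
        return ("answer", f"Found: {transcript[-1][2]}")

    tools = {"search": lambda q: f"top hit for {q!r}"}
    print(run_agent(toy_policy, tools, "LocalAI")[0])  # Found: top hit for 'LocalAI'
```

Sustaining hundreds of rounds mostly stresses the context window, since every observation is appended to the transcript the model sees next.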

Repository: localai · License: apache-2.0

deepseek-ai.deepseek-v3.2

This is a GGUF-quantized version of deepseek-ai's DeepSeek-V3.2, optimized for efficient deployment. It targets text generation (Hugging Face pipeline tag `text-generation`) and keeps the original DeepSeek-V3.2 architecture. For more details, refer to the [quantized model's repository](https://github.com/DevQuasar/deepseek-ai.DeepSeek-V3.2-GGUF).

Repository: localai

huihui-glm-4.7-flash-abliterated-i1

The model is a quantized version of **huihui-ai/Huihui-GLM-4.7-Flash-abliterated**, optimized for efficiency and deployment. It uses GGUF files with various quantization levels (e.g., IQ1_M, IQ2_XXS, Q4_K_M) and is designed for tasks requiring low-resource deployment. Key features include:

- **Base Model**: Huihui-GLM-4.7-Flash-abliterated (the original, unquantized weights).

- **Quantization**: Supports IQ1_M to Q4_K_M, balancing accuracy and efficiency.

- **Use Cases**: Suitable for applications needing lightweight inference, such as edge devices or resource-constrained environments.

- **Downloads**: Available in GGUF format with varying quality and size (e.g., 0.2GB to 18.2GB).

- **Tags**: Abliterated, uncensored, and optimized for specific tasks.

This model is an abliterated variant of GLM-4.7-Flash, distributed as quantized weights for deployment.

Repository: localai · License: mit

mox-small-1-i1

The model, **vanta-research/mox-small-1**, is a small-scale text-generation model optimized for conversational AI tasks. It supports chat, persona research, and chatbot applications. The quantized versions (e.g., i1-Q4_K_M, i1-Q4_K_S) are available for efficient deployment, with the i1-Q4_K_S variant offering the best balance of size, speed, and quality. The model is designed for lightweight inference and is compatible with frameworks like HuggingFace Transformers.

Repository: localai · License: apache-2.0

glm-4.7-flash

**GLM-4.7-Flash** is a 30B-A3B MoE (Mixture-of-Experts) model, with about 30B total parameters of which roughly 3B are active per token, designed for efficient deployment. It reports strong results on benchmarks such as AIME 25, GPQA, and τ²-Bench, balancing accuracy against inference cost. It supports inference via frameworks like vLLM and SGLang, with detailed deployment instructions in the official repository, and suits applications that need high-quality text generation with modest resource consumption.
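A 30B-A3B Mixture-of-Experts model runs only a small subset of its parameters for each token. The sketch below shows the general top-k routing mechanism behind that: a router scores every expert, only the k highest-scoring experts run, and their outputs are mixed by gate weights renormalised over the chosen experts. It is a generic illustration with toy experts; the expert count, k, and whether gates are normalised before or after the top-k selection vary between models, and nothing here reflects GLM-4.7-Flash's actual configuration.

```python
import math
from typing import Callable, Sequence

Expert = Callable[[list[float]], list[float]]


def softmax(xs: Sequence[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: list[float], router_logits: Sequence[float],
                experts: Sequence[Expert], top_k: int = 2):
    """Sparse MoE layer for a single token; returns (output, chosen expert ids)."""
    ranked = sorted(range(len(experts)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    gates = softmax([router_logits[i] for i in chosen])  # renormalise over the chosen experts
    out = [0.0] * len(x)
    for gate, idx in zip(gates, chosen):
        y = experts[idx](x)  # experts outside `chosen` are never evaluated
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, chosen


if __name__ == "__main__":
    # Four toy "experts" that just scale their input by 1, 2, 3 and 4.
    experts = [lambda v, s=s: [s * t for t in v] for s in (1.0, 2.0, 3.0, 4.0)]
    y, used = moe_forward([1.0], [0.1, 2.0, 0.3, 1.0], experts, top_k=2)
    print(used, y)  # experts 1 and 3 ran; the other two were skipped
```

Compute per token scales with k rather than with the total number of experts, which is why the active parameter count, not the total, drives inference speed.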

Repository: localai · License: mit

liquidai.lfm2-2.6b-transcript

This is a 2.6B-parameter language model for text-generation tasks. It is a quantized version of the original model `LiquidAI/LFM2-2.6B-Transcript`, optimized for efficiency while retaining strong performance, and is aimed at deployment for use cases such as transcript-related tasks and general language modeling. It is trained on large-scale text data and supports multiple languages.

Repository: localai

lfm2.5-1.2b-nova-function-calling

The **LFM2.5-1.2B-Nova-Function-Calling-GGUF** is a quantized version of the original model, optimized for efficiency with **Unsloth**. It supports text and multimodal tasks, using different quantization levels (e.g., Q2_K, Q3_K, Q4_K, etc.) to balance performance and memory usage. The model is designed for function calling and is faster than the original version, making it suitable for tasks like code generation, reasoning, and multi-modal input processing.
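Function calling usually means the client advertises tools with JSON-Schema parameters and the model replies with structured calls instead of free text. The sketch below builds such a request in the OpenAI-style `tools` format, which LocalAI's chat endpoint is designed to accept, and parses the `tool_calls` field of a response. The `get_weather` tool and the sample response are hand-written, hypothetical examples, not output from this model.

```python
import json

# Hypothetical tool, described with a JSON Schema for its arguments.
GET_WEATHER = {
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Get the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}


def build_payload(model: str, user_text: str) -> dict:
    """Chat-completions request body advertising the tool to the model."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_text}],
        "tools": [GET_WEATHER],
    }


def extract_tool_calls(response: dict) -> list[tuple[str, dict]]:
    """Return (function name, parsed arguments) for each tool call in a response."""
    message = response["choices"][0]["message"]
    calls = []
    for call in message.get("tool_calls") or []:
        fn = call["function"]
        # In this format the arguments arrive as a JSON-encoded string.
        calls.append((fn["name"], json.loads(fn["arguments"])))
    return calls


if __name__ == "__main__":
    # Hand-written response in the OpenAI chat-completions shape (illustrative only).
    sample = {"choices": [{"message": {"role": "assistant", "content": None, "tool_calls": [
        {"id": "call_1", "type": "function",
         "function": {"name": "get_weather", "arguments": "{\"city\": \"Berlin\"}"}}]}}]}
    print(extract_tool_calls(sample))  # [('get_weather', {'city': 'Berlin'})]
```

Because small models occasionally emit malformed JSON in `arguments`, production code would typically wrap the `json.loads` call and ask the model to retry on failure.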

Repository: localai · License: apache-2.0

mistral-nemo-instruct-2407-12b-thinking-m-claude-opus-high-reasoning-i1

The model described in this repository is a variant of **Mistral-Nemo-Instruct-2407-12B** (12 billion parameters), an instruction-tuned large language model, here targeting high-level reasoning tasks. It is a **quantized version** of the original model, compressed for efficiency while retaining key capabilities. The model is designed to generate human-like text and perform complex reasoning, making it suitable for applications requiring strong language understanding and generation.

Repository: localai

rwkv7-g1c-13.3b

The model is **RWKV7 g1c 13B**, a large language model optimized for efficiency. It is quantized using **Bartowski's calibrationv5 for imatrix** to reduce memory usage while maintaining performance. The base model is **BlinkDL/rwkv7-g1**, and this version is tailored for text-generation tasks. It balances accuracy and efficiency, making it suitable for deployment in various applications.

Repository: localai · License: apache-2.0
